Why Ethical AI in CRM Matters More Than Ever

In 2019, the Apple Card, a credit card issued by Goldman Sachs, made headlines. Customers reported that the card’s credit algorithm was assigning dramatically different limits to men and women with nearly identical financial profiles. One software engineer posted about it on social media: his wife had received a significantly lower limit, despite the couple sharing assets and her having the stronger individual credit score. The thread went viral, and the New York Department of Financial Services launched an investigation.

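Goldman Sachs stated that gender was not a variable in the model, and that was technically correct: the algorithm did not evaluate sex directly. Critics argued that it could still produce unequal outcomes through inputs such as purchase history, account types, and spending patterns, which reflect decades of systemic financial inequality between men and women. The regulator's 2021 report found no violation of fair lending law, but the episode showed how a model that learns from historical data can absorb biased patterns through proxy variables, and how quickly trust erodes when no one examined or explained those patterns before deployment.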

This is a pattern that plays out in CRM systems every day, at companies of every size, often unintentionally and without anyone realizing it until the damage is already done.

As businesses increasingly adopt AI-powered CRM platforms like Salesforce Einstein, responsible AI governance is becoming essential for compliance, customer trust, and long-term business growth.

What Bias in CRM Systems Actually Looks Like

In CRM systems, bias is often less visible than in a headline-making credit decision, but it can be just as consequential.

An Einstein Lead Scoring model trained on three years of historical sales data will accurately learn which types of prospects your team has historically converted. The problem is that historical conversion data reflects which prospects your team pursued, how persistently they followed up, and which accounts received the most attention from senior sales representatives.

If that attention was unevenly distributed, the AI model will encode the team's past focus as a proxy for lead quality. Accounts that resemble historical wins receive high scores, while accounts outside those patterns receive lower scores and less outreach.

The bias comes from historical CRM data shaped by human decisions, some of which may themselves have been biased.

AI Bias in Customer Service and Case Management

In customer service, the same issue appears differently.

A case prioritization model trained on historical resolution data may learn that enterprise accounts get resolved faster because support teams historically prioritized them. The AI then reinforces that pattern automatically. Smaller accounts wait longer, customer satisfaction drops, and churn increases.

According to Stanford’s 2025 AI Index Report, leading AI systems still demonstrate biases that reinforce existing stereotypes. The report also highlighted that AI transparency and governance standards remain below the levels required for major regulations such as GDPR and the EU AI Act.

The EU AI Act Changed AI Governance Forever

For years, responsible AI was mostly treated as a reputational concern. Companies deploying biased AI models faced criticism and occasional regulatory scrutiny.

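The EU AI Act, which entered into force in August 2024 and applies in phases, transformed AI ethics into a legal and compliance requirement. Obligations for general-purpose AI models began applying in August 2025, and most requirements for high-risk systems are scheduled to apply from August 2026.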

The Act establishes a risk-based framework for AI governance. AI systems affecting access to credit, employment, insurance, healthcare, or essential services are classified as high-risk systems.

Once the high-risk obligations apply, these systems require:

- Pre-deployment conformity assessments

- AI risk management processes

- Regular bias audits

- Human oversight mechanisms

- AI transparency and disclosure standards

- Ongoing monitoring and governance documentation

For companies selling into the EU or handling EU customer data through CRM platforms, these requirements are no longer optional.

Salesforce Einstein and High-Risk AI Systems

Salesforce Einstein Lead Scoring used in financial services may qualify as a high-risk AI application under the EU AI Act. AI-powered case prioritization in healthcare environments may also fall into the same category.

Even churn prediction models can create regulatory exposure if they systematically disadvantage specific customer groups.

In the United States, regulatory pressure is also increasing:

- The EEOC has issued guidance indicating that employers can be held liable for discriminatory outcomes from AI hiring tools, including tools supplied by vendors

- The FTC has issued guidance around AI in credit and insurance decisions

- States including California, Colorado, Texas, and Illinois are introducing AI governance regulations

The legal and operational risks of deploying unchecked AI in CRM systems are no longer theoretical.

Responsible AI Deployment Does Not Have to Slow Down Innovation

One of the biggest misconceptions about AI ethics frameworks is that governance slows down innovation.

In reality, modern AI governance can be embedded directly into CRM architecture.

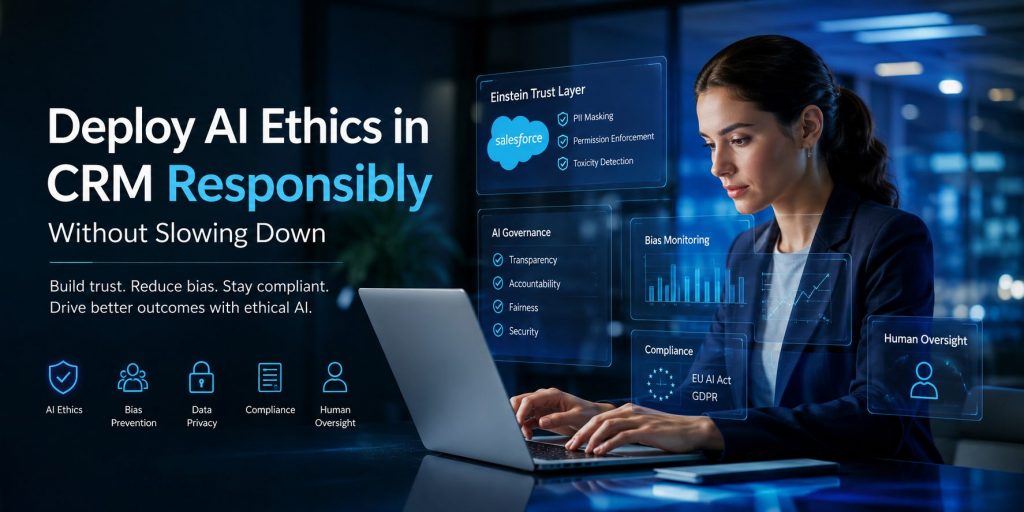

Salesforce addressed this challenge through the Einstein Trust Layer.

How the Salesforce Einstein Trust Layer Supports Responsible AI

The Einstein Trust Layer operates automatically within Salesforce AI workflows.

When prompts are sent from Salesforce users to external large language models (LLMs), the Trust Layer automatically masks personally identifiable information (PII) before the data leaves the system.

Customer names, email addresses, phone numbers, and financial data are replaced with anonymized placeholders. The LLM never sees the original sensitive data.

Once the model generates a response, Salesforce unmasks the placeholders so users receive personalized outputs without exposing raw customer information externally.
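The mask-then-unmask flow described above can be sketched in a few lines. This is an illustration of the general technique only, not Salesforce's implementation: the regular expressions, the placeholder format, and the function names `mask_pii` and `unmask` are all assumptions made for this example.

```python
import re

# Illustrative patterns only (assumption); a production masker would cover
# many more PII types, such as names and account numbers.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def mask_pii(prompt: str) -> tuple[str, dict[str, str]]:
    """Replace each PII match with a placeholder and remember the mapping."""
    mapping: dict[str, str] = {}
    masked = prompt
    for label, pattern in PII_PATTERNS.items():
        def substitute(match: re.Match, label: str = label) -> str:
            placeholder = f"<{label}_{len(mapping) + 1}>"
            mapping[placeholder] = match.group(0)
            return placeholder
        masked = pattern.sub(substitute, masked)
    return masked, mapping


def unmask(response: str, mapping: dict[str, str]) -> str:
    """Restore the original values in the text returned by the model."""
    for placeholder, original in mapping.items():
        response = response.replace(placeholder, original)
    return response
```

In this sketch only the masked prompt would be sent to the external model, while the mapping stays inside the system and is applied to the response afterwards.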

The Trust Layer also enforces existing Salesforce permission settings in every AI interaction.

If a support agent does not normally have access to a customer’s billing history, the AI assistant cannot access it either.
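As a rough illustration of that principle, the context handed to an AI assistant can be filtered down to the fields the requesting user may already read. The function name, field names, and permission set below are hypothetical and do not reflect how Salesforce stores or checks permissions.

```python
def build_prompt_context(record: dict[str, str], readable_fields: set[str]) -> dict[str, str]:
    """Keep only the fields the requesting user is already allowed to read."""
    return {name: value for name, value in record.items() if name in readable_fields}


# Hypothetical case record and agent permissions (no billing access).
case_record = {"subject": "Login issue", "billing_history": "3 late payments"}
agent_fields = {"subject"}

context = build_prompt_context(case_record, agent_fields)
```

Because the filter runs before the prompt is assembled, the billing history never reaches the assistant for this agent.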

This architecture helps organizations:

- Maintain CRM data governance

- Reduce compliance risks

- Improve AI security and privacy

- Accelerate responsible AI deployment

- Support GDPR and EU AI Act readiness

Governance becomes part of the infrastructure rather than a separate operational bottleneck.

Five Practical Steps for Ethical AI Deployment in CRM

Responsible AI implementation in CRM systems does not require months of review cycles. It requires a consistent governance process.

1. Audit CRM Data Before Training AI Models

Before training AI models on historical CRM data, conduct a distribution analysis.

Review:

- Which industries are represented

- Which customer segments dominate win data

- Geographic distribution patterns

- Account size representation

- Historical engagement gaps

This helps identify bias risks before the model is deployed.
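A distribution analysis of this kind can start very simply. The sketch below assumes historical opportunities have been exported as dictionaries with a boolean `won` key and a segment field such as `industry`; those field names and the 10% threshold are assumptions for illustration, not Salesforce field names or an industry standard.

```python
from collections import Counter


def segment_distribution(records: list[dict], field: str) -> dict[str, dict[str, float]]:
    """Return each segment's share of all records and its historical win rate."""
    totals = Counter(r[field] for r in records)
    wins = Counter(r[field] for r in records if r["won"])
    n = len(records)
    return {
        segment: {"share": count / n, "win_rate": wins[segment] / count}
        for segment, count in totals.items()
    }


def underrepresented(distribution: dict[str, dict[str, float]], min_share: float = 0.10) -> list[str]:
    """List segments whose share of the training data falls below min_share."""
    return sorted(s for s, stats in distribution.items() if stats["share"] < min_share)
```

Segments that appear in the underrepresented list are the ones where a model has the least evidence, so their scores deserve the most scrutiny.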

2. Define Fairness Metrics Alongside Performance Metrics

CRM AI success should not be measured only by conversion rates or pipeline velocity.

Organizations should also evaluate:

- Fairness across customer segments

- Consistency across geographies

- Equal opportunity scoring patterns

- Bias indicators in recommendations

AI performance metrics should balance business outcomes with equitable outcomes.
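One way to make "fairness across customer segments" measurable is to compare how often each segment receives a high score. The sketch below computes a selection rate per segment and a ratio against the best-served segment. The score threshold of 70 and the field names are assumptions for this example, and comparing the ratio to 0.8 borrows the "four-fifths" heuristic from US employment practice rather than any CRM-specific standard.

```python
from collections import defaultdict


def selection_rates(scored_leads: list[dict], threshold: float = 70) -> dict[str, float]:
    """Share of leads in each segment scoring at or above the threshold."""
    counts = defaultdict(lambda: [0, 0])  # segment -> [selected, total]
    for lead in scored_leads:
        bucket = counts[lead["segment"]]
        bucket[0] += int(lead["score"] >= threshold)
        bucket[1] += 1
    return {segment: selected / total for segment, (selected, total) in counts.items()}


def disparate_impact(rates: dict[str, float]) -> dict[str, float]:
    """Ratio of each segment's selection rate to the highest segment's rate."""
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:
        return {segment: 1.0 for segment in rates}
    return {segment: rate / best for segment, rate in rates.items()}
```

A segment whose ratio falls well below 0.8 is not proof of bias, but it is a reasonable trigger for a closer look at the training data and features.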

3. Add Human Oversight for High-Stakes AI Decisions

The Einstein Trust Layer secures data automatically, but human oversight still matters.

Organizations should identify CRM workflows where AI recommendations meaningfully impact customers or prospects and ensure regular human review.

Examples include:

- Lead prioritization

- Loan eligibility recommendations

- Retention offer decisions

- Customer escalation prioritization
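A human-review rule for these workflows can be expressed as a small routing function. The sketch below is one possible policy, not a prescribed one: the workflow identifiers, the split between decisions that always need approval and those that are audited periodically, and the 0.8 confidence floor are all assumptions.

```python
from dataclasses import dataclass

# Workflow names mirror the examples above; the identifiers are hypothetical.
ALWAYS_REVIEW = {"loan_eligibility", "retention_offer"}
SAMPLE_REVIEW = {"lead_prioritization", "escalation_prioritization"}


@dataclass
class Recommendation:
    workflow: str
    action: str
    confidence: float  # model confidence between 0.0 and 1.0


def review_route(rec: Recommendation, min_confidence: float = 0.8) -> str:
    """Decide whether a recommendation needs a person before it takes effect."""
    if rec.workflow in ALWAYS_REVIEW:
        return "human_approval"
    if rec.confidence < min_confidence:
        return "human_approval"
    if rec.workflow in SAMPLE_REVIEW:
        return "periodic_audit"
    return "auto_apply"
```

Keeping the policy in one function makes it easy to document, test, and adjust as regulatory expectations change.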

4. Create AI Model Documentation and Model Cards

Every AI model should have clear documentation before deployment.

A model card should explain:

- What the model does

- What data it was trained on

- What use cases it supports

- What it should not be used for

- How it will be monitored over time

Salesforce already publishes model cards for Einstein features, and businesses should adopt the same best practice internally.
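An internal model card does not need special tooling. A minimal sketch, assuming a plain Python dataclass whose fields mirror the five questions above (the class and field names are illustrative and are not Salesforce's model card format):

```python
from dataclasses import asdict, dataclass, field


@dataclass
class ModelCard:
    name: str
    purpose: str = ""
    training_data: str = ""
    supported_use_cases: list[str] = field(default_factory=list)
    out_of_scope_uses: list[str] = field(default_factory=list)
    monitoring_plan: str = ""

    def missing_sections(self) -> list[str]:
        """Names of sections that are still empty, in field order."""
        return [key for key, value in asdict(self).items() if not value]
```

A deployment checklist could then block go-live until `missing_sections()` returns an empty list.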

5. Establish Ongoing AI Monitoring and Review Cycles

AI models degrade over time.

Customer behavior changes, market conditions shift, and CRM data evolves.

Organizations should schedule regular reviews to:

- Compare predictions against actual outcomes

- Identify drift in model accuracy

- Reassess fairness metrics

- Update training datasets

- Maintain regulatory compliance

Continuous AI monitoring is essential for long-term responsible AI governance.
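Comparing predictions against actual outcomes can be automated with a short periodic check. The sketch below assumes binary predictions and outcomes collected per review period; the 5-point tolerance and the function names are assumptions, and accuracy is used only as the simplest possible drift signal.

```python
def accuracy(predictions: list[int], outcomes: list[int]) -> float:
    """Fraction of predictions that matched the actual outcome."""
    if not predictions or len(predictions) != len(outcomes):
        raise ValueError("predictions and outcomes must be non-empty and equal in length")
    return sum(p == o for p, o in zip(predictions, outcomes)) / len(predictions)


def drift_report(baseline_accuracy: float, periods: list[tuple], tolerance: float = 0.05) -> list[dict]:
    """Flag review periods whose accuracy fell more than `tolerance` below baseline."""
    report = []
    for label, predictions, outcomes in periods:
        acc = accuracy(predictions, outcomes)
        report.append({
            "period": label,
            "accuracy": acc,
            "drifted": baseline_accuracy - acc > tolerance,
        })
    return report
```

The same loop is a natural place to recompute fairness metrics, so that accuracy and equity are reviewed on the same schedule.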

Why Businesses Choose Team Sarla for Responsible Salesforce AI Deployment

Sarla Consulting helps organizations implement Salesforce AI features with governance, compliance, and responsible AI principles built into the process from day one.

We work with businesses across financial services, healthcare, nonprofits, and retail, industries where AI governance, compliance, and ethical decision-making are business-critical.

Our Approach to Responsible AI in CRM

| What We Do | What It Addresses | Outcome |
|---|---|---|
| Pre-deployment data audit for AI readiness and bias risk | Identifies distribution gaps, historical bias, and CRM data quality issues before a model learns from them | Models start from a fairer foundation with documented bias risks before go-live |
| Einstein Trust Layer configuration and review | Confirms PII masking, permission enforcement, and toxicity detection settings are correctly configured | AI operates within existing Salesforce data governance standards |
| AI governance framework design | Defines ownership, review cycles, escalation paths, and accountability for AI decisions | Creates structured governance instead of isolated policy documents |
| Model card and documentation templates | Documents model purpose, training data, limitations, and monitoring standards | Improves transparency and audit readiness |
| Fairness metric design alongside performance metrics | Adds fairness evaluation criteria to AI model assessments | AI models are measured against equitable outcomes, not just revenue metrics |
| EU AI Act and regulatory readiness assessment | Reviews Salesforce AI deployments against EU AI Act and US regulatory requirements | Identifies compliance exposure and remediation priorities |
| Post-launch monitoring and managed services | Ongoing monitoring, retraining support, and incident response management | Keeps AI systems accurate, fair, and compliant over time |

Final Thoughts: Ethical AI in CRM Is a Competitive Advantage

Responsible AI deployment in CRM is no longer just about avoiding reputational damage.

It is now directly connected to:

- Regulatory compliance

- Customer trust

- AI governance readiness

- Data security and privacy

- Long-term business scalability

Organizations that build ethical AI practices into their CRM strategy early will move faster, reduce risk, and create stronger customer relationships over time.

With platforms like Salesforce Einstein and governance frameworks like the Einstein Trust Layer, businesses can deploy AI responsibly without sacrificing speed or innovation.